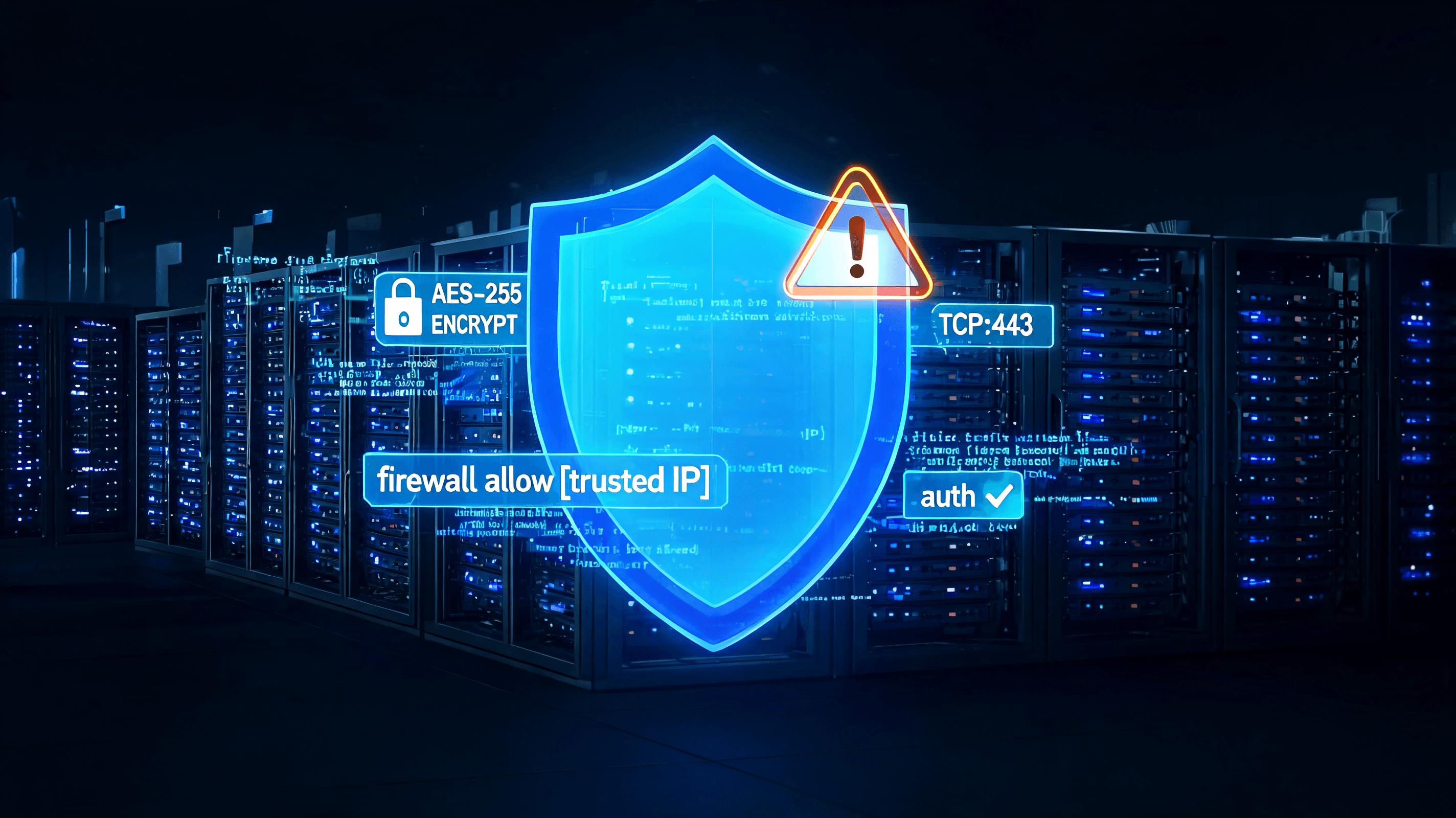

Record-Breaking Patch Tuesday: Microsoft Fixes More Than 200 Security Vulnerabilities

Microsoft's June 2026 Patch Tuesday release was its largest on record, fixing more than 200 vulnerabilities. Experts credit AI with accelerating vulnerability discovery and urge enterprises to prioritize automated patching.