AI in the Kill Chain: DOD Official Reveals Bot-Driven Targeting Plans

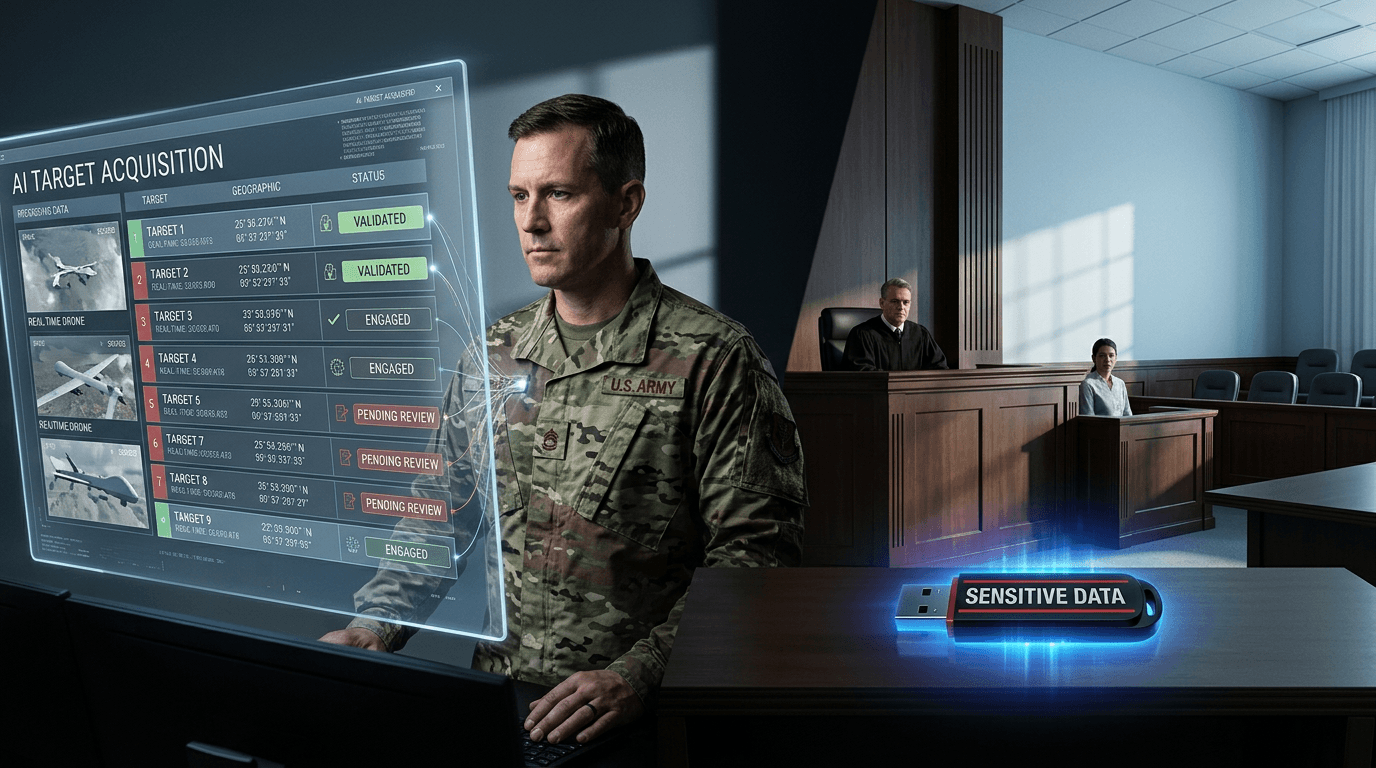

As reported by MIT Technology Review, a US Defense Department official has disclosed that the military is actively considering the use of generative AI chatbots to rank and recommend strike targets. Under this proposed framework, AI systems would process massive datasets to provide a prioritized list of potential targets, which human commanders would then vet and authorize. This disclosure comes at a time of heightened scrutiny over the precision and accountability of military strikes. While proponents argue that AI can significantly reduce decision-making time and collateral damage through better data analysis, critics warn about the moral implications of delegating life-and-death assessments to algorithms.

The Anthropic Legal Saga: Safety Frameworks vs. Defense Procurement

Alongside this push to integrate AI into military operations, a legal conflict between the AI lab Anthropic and the Department of Defense is deepening. According to Wired, the legal battle centers on how Anthropic’s "Constitutional AI" principles fit, or fail to fit, within the rigid structures of federal procurement and national security requirements. The lawsuit raises complex questions under the Administrative Procedure Act (APA), as the government seeks to leverage cutting-edge AI for defense while Anthropic attempts to maintain strict safety guardrails. The case is viewed as a landmark dispute that could shape the terms under which private AI firms collaborate with the military.

Data Integrity Alarms: The Case of John Solly and Social Security Records

A separate but equally alarming controversy involves allegations against John Solly, an operative associated with the Department of Government Efficiency (DOGE). As detailed by Wired, a whistleblower complaint alleges that Solly claimed to have taken sensitive Social Security data on a thumb drive, intending to bring it to his new position at defense contractor Leidos. While Solly and Leidos have issued strong denials, the allegations have prompted a federal investigation into potential violations of the Defend Trade Secrets Act (DTSA) and privacy laws. The incident highlights the growing risk of data theft as government contractors compete in the high-stakes AI and data analytics market.

Expert Insights: Human Judgment in the Age of Recommendation Engines

Legal scholars are increasingly concerned about the "automation bias" inherent in AI recommendation systems. Even if a human officially makes the final decision, the way AI presents and ranks options can steer outcomes in predictable directions. DOD Directive 3000.09 mandates "appropriate levels of human judgment," but applying this to generative models that lack transparency is a significant challenge. Furthermore, international legal experts point out that systems assisting in targeting must adhere to the Geneva Conventions’ principles of distinction and proportionality. The Anthropic case, in particular, tests whether the legal system can enforce safety standards on models designed for general-purpose use when they enter a combat environment.

The Road Ahead: Regulation and Accountability in 2026

The confluence of these events (military targeting disclosures, high-profile lawsuits, and data theft allegations) points to a turbulent year for defense policy. US lawmakers are expected to push for more stringent oversight of AI integration within the Pentagon. As the line between civilian technology and military application blurs, the demand for transparent, auditable AI systems will only grow. The year 2026 is likely to be a defining one for establishing the international norms and domestic laws that will govern the use of artificial intelligence in global conflicts and national security infrastructure.