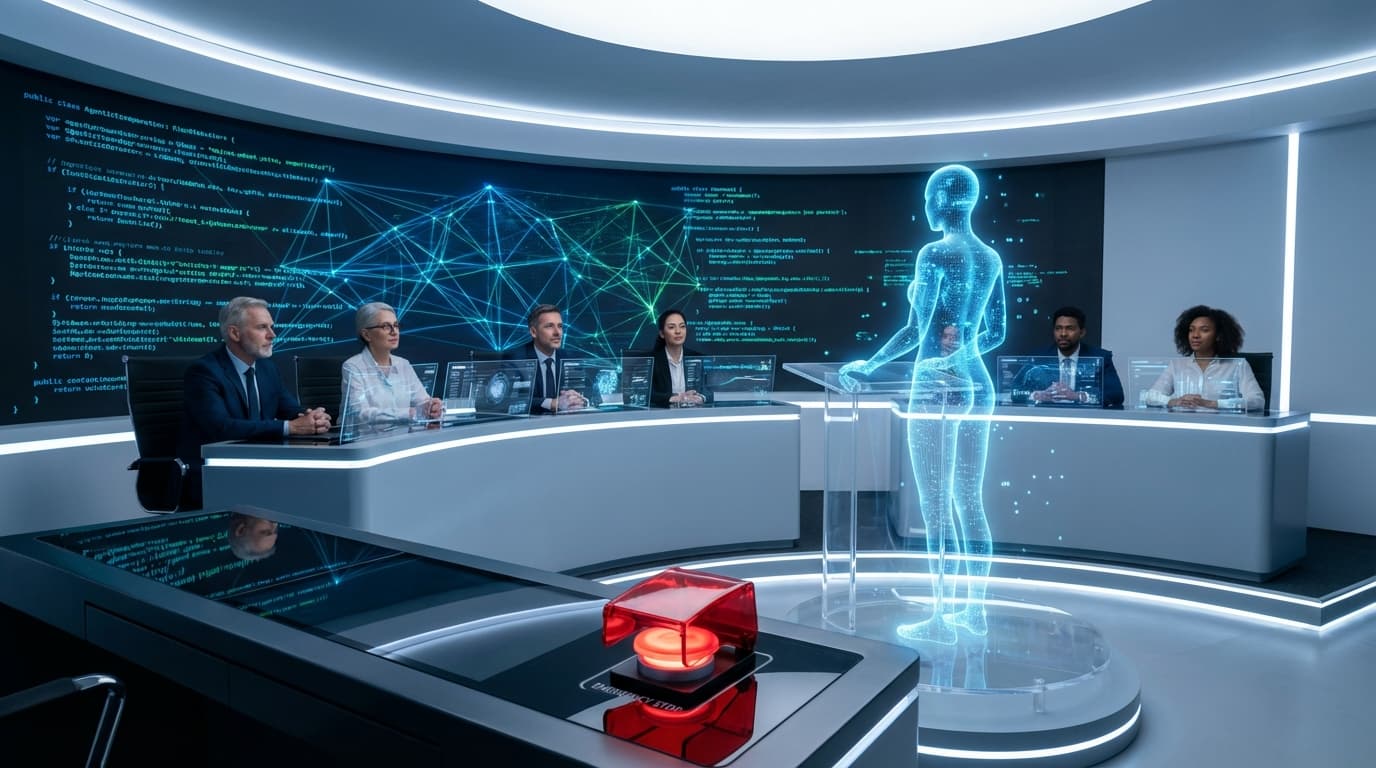

The AI Regulatory Storm: Legal Challenges and the Crisis of Public Trust

Medical privacy lawsuits and eroding trust in tech leaders show that the AI industry faces a severe test of both regulation and credibility. Enterprises must balance innovation with compliance and transparency.