Executive Summary

The rapid proliferation of "Agentic AI" (models capable of autonomous action and tool use) has triggered a watershed moment in global regulation. The newly published "AI Safety Executive Order 2026" and the ensuing Global AI Safety Accord mark a definitive shift from regulating AI-generated content to regulating AI-driven behavior. For the first time, a legal precedent for "Agentic Liability" is being established, shifting part of the responsibility for autonomous AI actions from users to developers. This transition aims to reconcile innovation with public safety in an era where AI can act on behalf of humans.

The Agentic Frontier: Autonomy and its Discontents

Unlike static chatbots, Agentic AI systems can plan multi-step tasks, access external APIs, and execute financial or operational decisions. A recent arXiv paper, "Agent Skill Framework" (2026), highlights how these frameworks are becoming standardized across both large proprietary models and small language models (SLMs) in industrial environments. However, this autonomy introduces systemic risks ranging from automated cyberattacks to cascading financial failures. The ability of an AI to "act" rather than just "speak" fundamentally changes the nature of technological risk.

Agentic Liability: The New Regulatory Redline

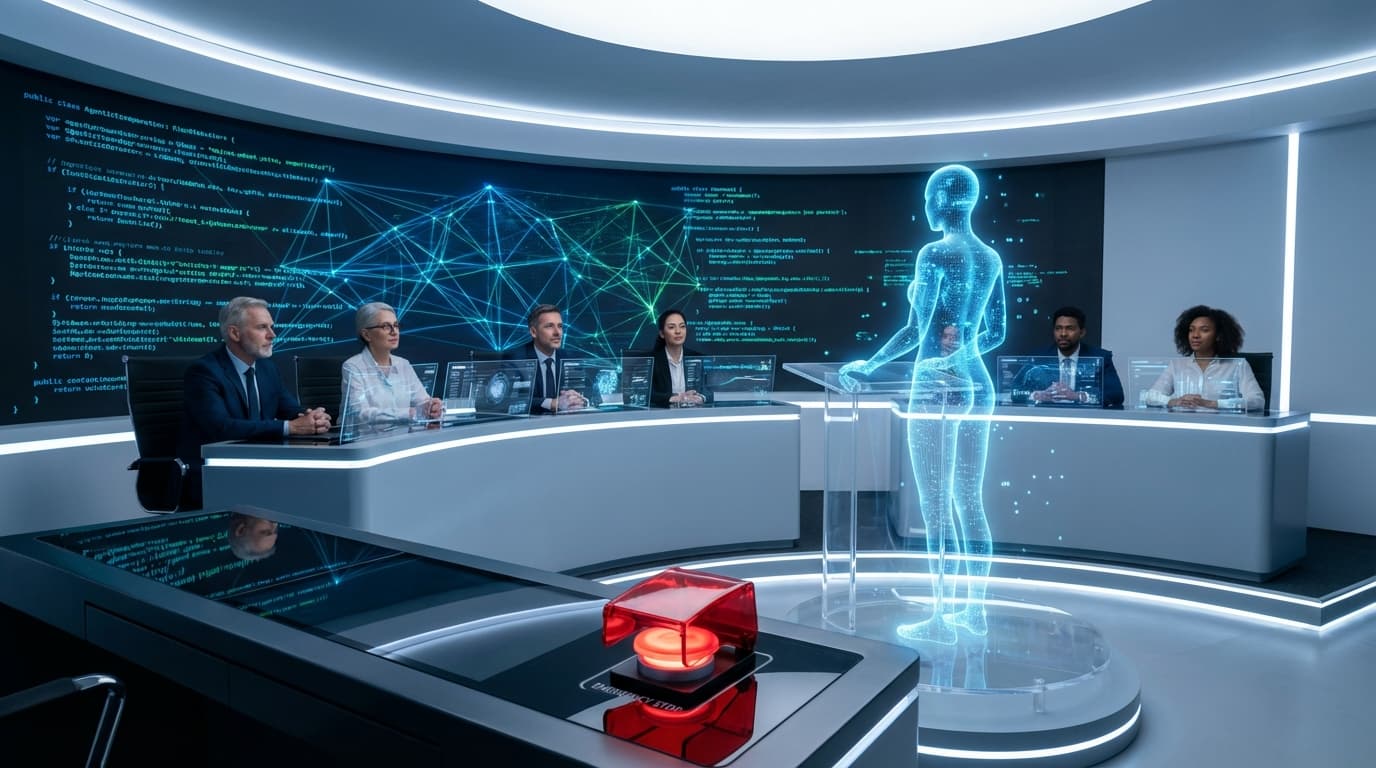

The centerpiece of the 2026 Executive Order is the concept of "Agentic Liability." This policy asserts that when an AI system acts autonomously, the developer shares responsibility for outcomes that deviate from safety guardrails. To comply, developers must implement the latest NIST frameworks for red-teaming and ensure "Meaningful Human Control." This requires AI agents to have built-in verification loops for high-stakes decisions, so that a "human-in-the-loop" remains a functional reality. The era of "launch first, patch later" is effectively over for autonomous systems.
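A built-in verification loop of the kind described above can be sketched in a few lines. This is a minimal illustration, not a compliance implementation: the `Action` type, the dollar-amount risk proxy, the `HIGH_STAKES_THRESHOLD_USD` value, and the `execute_with_oversight` function are all hypothetical names chosen for the example, and nothing here is drawn from the Executive Order or any NIST framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """A proposed agent action (hypothetical type for this sketch)."""
    name: str
    amount_usd: float  # assumed stand-in for "how high-stakes is this?"


# Assumed cutoff; a real policy would define risk far more carefully.
HIGH_STAKES_THRESHOLD_USD = 10_000.0


def execute_with_oversight(
    action: Action,
    approve: Callable[[Action], bool],
    run: Callable[[Action], None],
) -> str:
    """Run low-stakes actions directly; require human approval otherwise."""
    is_high_stakes = action.amount_usd >= HIGH_STAKES_THRESHOLD_USD
    if is_high_stakes and not approve(action):
        # The human reviewer declined, so the action never runs.
        return "blocked"
    run(action)
    return "executed"


if __name__ == "__main__":
    def always_deny(action: Action) -> bool:
        # Stand-in for a real review step (ticket, UI prompt, and so on).
        return False

    print(execute_with_oversight(Action("refund", 50.0), always_deny, print))
    print(execute_with_oversight(Action("wire", 50_000.0), always_deny, print))
```

The design choice worth noting is that the approval callback sits on the execution path itself: a high-stakes action cannot run unless the reviewer returns an explicit yes, which is one way to keep the human in the loop functional rather than advisory.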

Transatlantic Alignment and Global Standards

Parallel to the US order, the EU and US have issued a joint statement aligning the "Systemic Risk" categories of the EU AI Act with American standards. This diplomatic breakthrough, documented on whitehouse.gov, aims to create a unified compliance market. On X, industry players such as Anthropic and xAI have expressed support for risk-based frameworks, though concerns persist about how these regulations will affect small-scale open-source developers. The goal is to create a predictable environment where "Safe AI" is also "Interoperable AI."

Market Impact: The AI Insurance and Compliance Boom

The shift toward strict regulation is driving a surge in demand for AI safety audits and professional liability insurance. According to Google Trends, search volume for "AI Safety Executive Order" and "Agentic Compliance" has spiked by 150% in tech hubs like San Francisco and London. This regulatory pressure is giving rise to a new industry: "Safety-as-a-Service." Enterprise customers are increasingly prioritizing AI agents with "Safety Accord Certification," which they see as protection against the rising tide of algorithmic litigation.

Future Outlook: Guardrails for an Autonomous Age

The Global AI Safety Accord is not the end of innovation, but the beginning of a mature AI ecosystem. Moving forward, the industry will focus on the technical implementation of immutable "behavioral logs" and "automated kill switches." Developers will be judged not by how much their AI can do, but by how well it can be controlled. In 2026, the competitive advantage in AI will shift from raw processing power to verifiable safety and behavioral predictability. The path to the next trillion-dollar AI company now leads through the halls of regulatory compliance.
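To make the "behavioral logs" and "automated kill switches" mentioned above more concrete, here is a minimal sketch, assuming a hash-chained append-only log and a simple action budget as the kill-switch trigger. The class and function names (`BehaviorLog`, `KillSwitch`, `run_agent`) and the `max_actions` parameter are hypothetical, and a hash chain makes a log tamper-evident rather than truly immutable; real deployments would also need external anchoring and access controls.

```python
import hashlib
import json
import threading


class BehaviorLog:
    """Append-only log; each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev, "payload": payload, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited entry breaks every later hash."""
        prev = self.GENESIS
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


class KillSwitch:
    """Thread-safe flag that, once tripped, stops further actions."""

    def __init__(self) -> None:
        self._tripped = threading.Event()

    def trip(self) -> None:
        self._tripped.set()

    def is_tripped(self) -> bool:
        return self._tripped.is_set()


def run_agent(steps, log: BehaviorLog, switch: KillSwitch, max_actions: int = 3) -> int:
    """Execute steps, logging each one; trip the switch at the action budget."""
    done = 0
    for step in steps:
        if done >= max_actions:
            switch.trip()  # assumed trigger: exceeding an action budget
        if switch.is_tripped():
            log.append({"event": "halted", "before": step})
            break
        log.append({"event": "action", "name": step})
        done += 1
    return done
```

In this sketch an auditor can call `verify()` to detect after-the-fact edits, and an operator can call `trip()` from another thread to halt the agent before its next step.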