Tech Frontline Jason··2 min read

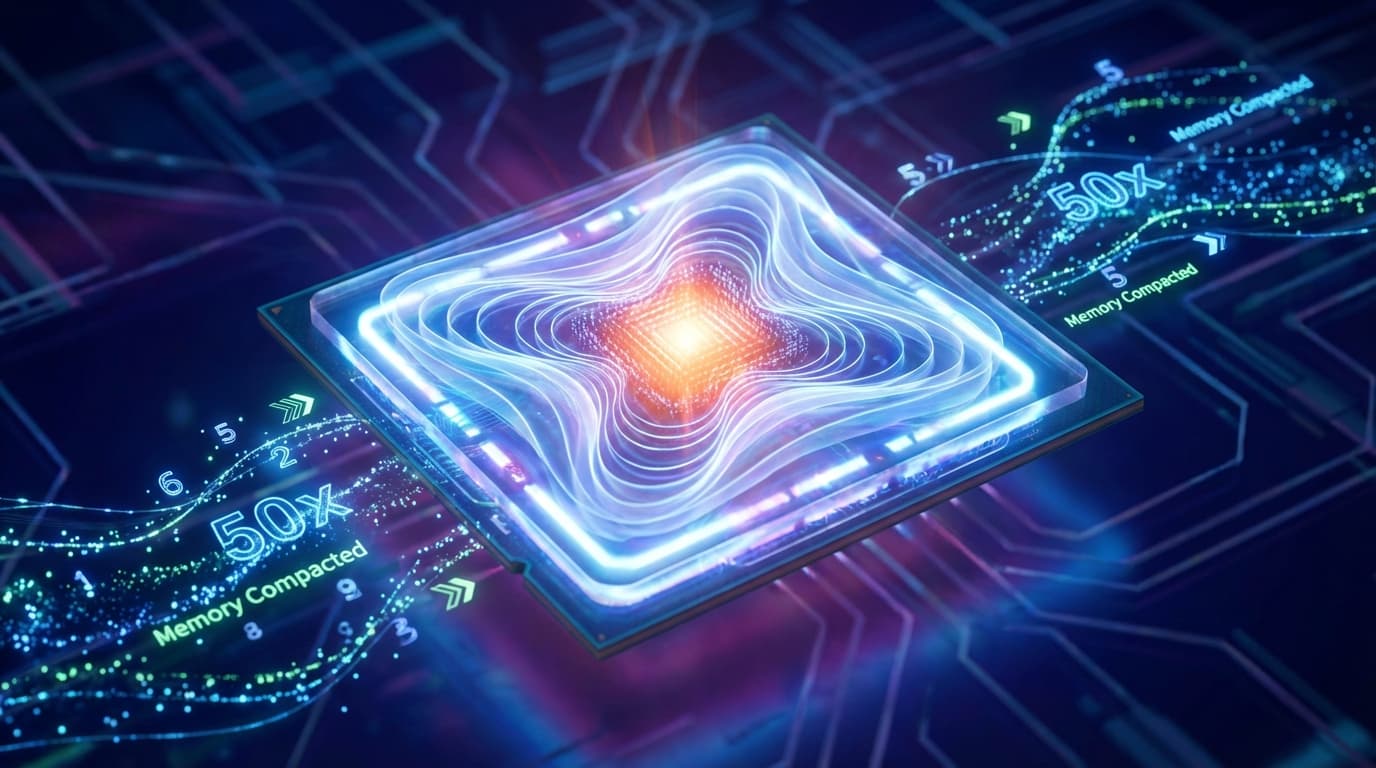

The March of Nines: MIT Breakthrough in KV Cache Compression Cuts LLM Memory Usage by 50x

MIT researchers have developed 'Attention Matching,' a technique that slashes LLM KV cache memory usage by 50x without sacrificing accuracy. Read alongside Andrej Karpathy's emphasis on the 'March of Nines' for reliability, the result signals a major step toward making high-performance AI deployment affordable and stable for enterprise use.
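The post does not describe how Attention Matching works internally, so the sketch below does not implement it. It only shows what a 50x reduction means in bytes, using the standard KV cache sizing arithmetic: one key tensor and one value tensor per layer, per KV head, per token. The model shape in `KVConfig` (32 layers, 8 KV heads, head dimension 128, 2-byte fp16/bf16 values) and the 128,000-token context are hypothetical values chosen for illustration, not figures from the MIT work.

```python
from dataclasses import dataclass


@dataclass
class KVConfig:
    # Hypothetical model shape, chosen only to illustrate the arithmetic
    num_layers: int = 32
    num_kv_heads: int = 8
    head_dim: int = 128
    bytes_per_elem: int = 2  # fp16 / bf16


def kv_cache_bytes(cfg: KVConfig, seq_len: int, batch: int = 1) -> int:
    """Bytes needed to cache keys and values for every layer and token."""
    # Leading factor 2 = one key tensor plus one value tensor per layer
    return (2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim
            * cfg.bytes_per_elem * seq_len * batch)


def compressed_bytes(full_bytes: int, ratio: float) -> float:
    """Cache size after an assumed uniform compression ratio (e.g. 50x)."""
    return full_bytes / ratio


if __name__ == "__main__":
    cfg = KVConfig()
    full = kv_cache_bytes(cfg, seq_len=128_000)
    small = compressed_bytes(full, ratio=50)
    print(f"uncompressed cache:  {full / 2**30:.2f} GiB")
    print(f"50x compressed:      {small / 2**30:.2f} GiB")
```

With this assumed shape each token costs 128 KiB of cache, so a single long-context request needs roughly 15.6 GiB before compression and about a third of a GiB after a 50x reduction, which is the kind of difference that decides how many concurrent requests fit on one GPU.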