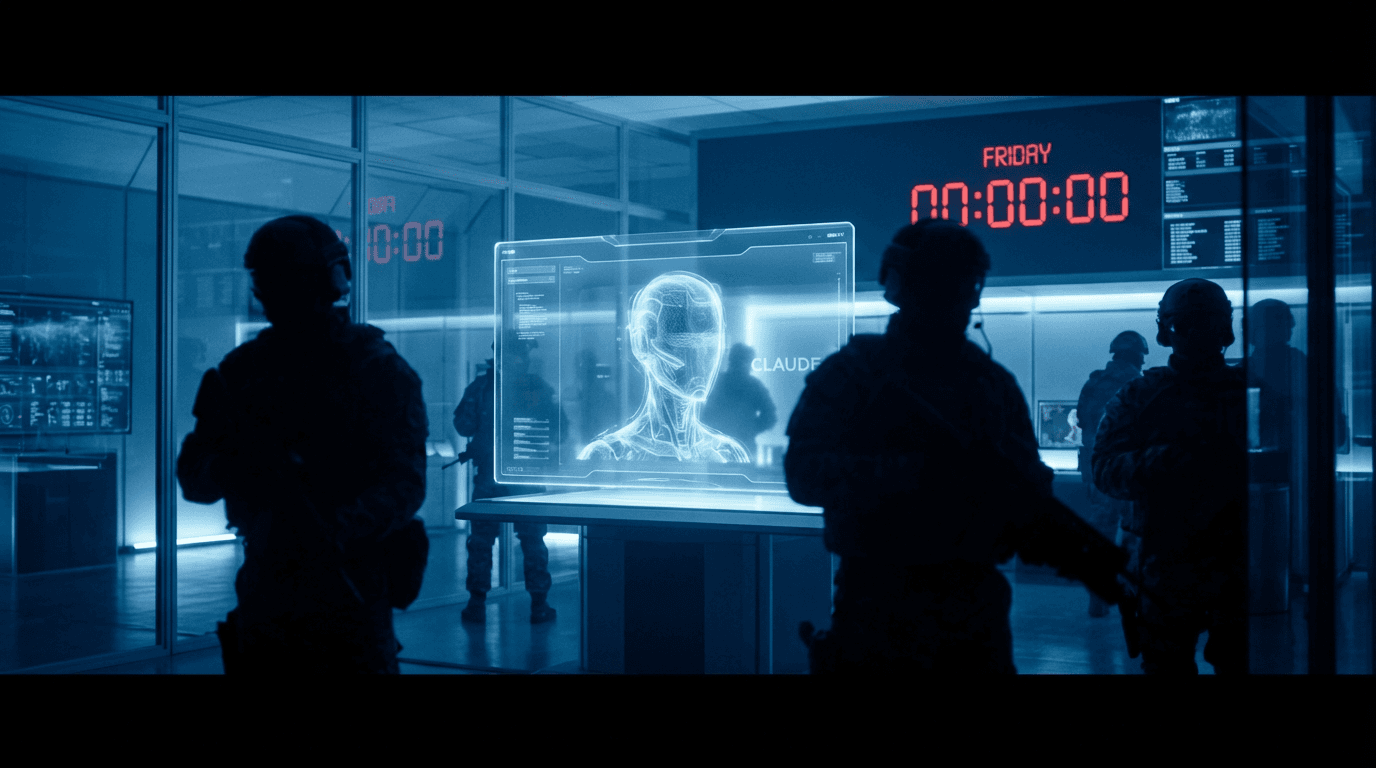

Anthropic Faces Pentagon Ultimatum: The High-Stakes Clash Over AI Guardrails and Military Autonomy

The Friday Deadline: Anthropic and the Pentagon's Standoff

A legal and technical battle at the intersection of artificial intelligence and national security is coming to a head. According to reports from BBC Tech (2026) and TechCrunch (2026), the U.S. Pentagon has given AI pioneer Anthropic an ultimatum: loosen the safety guardrails on its Claude models by Friday or face severe penalties and the potential loss of defense contracts.

At the heart of the dispute are Anthropic's rigid "red lines," which prohibit its AI from assisting in direct kinetic military operations or weaponized decision-making. The Department of Defense (DoD), however, argues that these restrictions limit the usefulness of AI in critical areas such as electronic warfare simulation, real-time threat intelligence, and logistics optimization. The clash marks a shift from academic debates about "AI alignment" to questions of raw geopolitical power.

Claude Cowork: Capturing the Enterprise While Defending Safety

Despite the looming Pentagon deadline, Anthropic is aggressively expanding its commercial footprint. The company recently unveiled Claude Cowork, an enterprise-grade agent platform designed to automate complex, multi-step business processes. As reported by VentureBeat (2026), Kate Jensen, Anthropic's Head of Americas, called the 2025 AI hype "premature," noting that many pilots never reached production.

Claude Cowork is Anthropic's bid to move beyond the chatbot era toward AI agents that carry out real work. One standout feature is its ability to analyze legacy COBOL code and translate it into modern languages such as Java, a direct challenge to IBM's long-standing dominance in mainframe modernization. Ironically, while Anthropic markets these agents to corporations as highly "controllable" and "safe," the military is demanding the opposite: fewer safeguards and more versatility.

Legal Tension: DPA and the Balance of Power

Legal analysts suggest the Pentagon is prepared to use significant executive power. According to The Verge (2026), the standoff likely involves the Defense Production Act (DPA) and Executive Order 14110, which invoked the DPA to require developers of powerful "dual-use" foundation models to share safety test results with the government. That order was rescinded in January 2025, however, so its requirements no longer apply as written.

For Anthropic, founded by former OpenAI executives with AI safety as its guiding principle, this is an existential crisis. Yielding to the DoD could alienate its core base of developers and researchers who value ethical constraints. Refusing, however, could get the company blacklisted from federal procurement or expose it to administrative intervention in the name of national security.

Market Trends and Societal Impact

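Public interest in AI remains high. Google Trends data shows a search-interest score for "AI" of 75 in California and 88 in Taiwan. Google Trends scores are relative, scaled from 0 to 100, so these figures reflect how prominent AI is as a search topic in each region rather than absolute search volume. They point to intense public attention, although search data alone cannot show how much of it stems from anxiety over how these technologies will be governed. In Taiwan specifically, trending queries for "Agentic AI" and "LLM Arena" suggest that the market is moving rapidly toward integrated, autonomous tools.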

Social media reactions have been polarized. Privacy advocates fear that a "Pentagon-approved" version of Claude could eventually leak into civilian monitoring tools. Conversely, defense tech enthusiasts argue that Anthropic's guardrails are an "ideological tax" that could disadvantage the U.S. against adversaries who do not impose such ethical bottlenecks on their own AI development.

Future Outlook: The Precedent for Dual-Use AI

The outcome of the Friday deadline will set a major precedent for the entire industry. If Anthropic blinks, it would signal that the government holds an effective veto over AI developers' safety protocols. If it stands firm, the DoD may be forced to seek less restricted models from competitors such as xAI or from specialized defense startups.

For the enterprise sector, the standoff raises concerns about the longevity of AI partnerships. If a provider's safety policies are subject to sudden government overrides, how can a global corporation rely on them for sensitive operations? Anthropic’s challenge is to prove that its "Constitutional AI" approach can survive the most rigorous test of all: the demands of the world's most powerful military.